3D Game Rendering 101

You're playing the latest Call of Mario: Deathduty Battleyard on your perfect gaming PC. You're looking at a beautiful 4K ultra widescreen monitor, admiring the glorious scenery and intricate detail. Ever wondered just how those graphics got there? Curious about what the game made your PC do to make them?

Welcome to our 101 in 3D game rendering: a beginner's guide to how one basic frame of gaming goodness is made.

Each year, hundreds of new games are released around the globe -- some are designed for mobile phones, some for consoles, some for PCs. The range of formats and genres covered is just as comprehensive, but there is one type that is possibly explored by game developers more than any other kind: 3D. The first ever of its ilk is somewhat open to debate and a quick scan of the Guinness World Records database produces various answers. We could pick Knight Lore by Ultimate, launched in 1984, as a worthy starter but the images created in that game were strictly speaking 2D -- no part of the data used is ever truly 3 dimensional.

So if we're going to understand how a 3D game of today makes its images, we need a different starting example: Winning Run by Namco, around 1988. It was perhaps the first of its kind to work out everything in 3 dimensions from the start, using techniques that aren't a million miles away from what's going on now. Of course, any game over 30 years old isn't going to truly be the same as, say, Codemasters' F1 2022, but the basic scheme of doing it all isn't vastly different.

In this article, we'll walk through the process a 3D game takes to produce a basic image for a monitor or TV to display. We'll start with the end result and ask ourselves: "what am I looking at?"

From there, we will analyze each step performed to get that picture we see. Along the way, we'll cover neat things like vertices and pixels, textures and passes, buffers and shading, as well as software and instructions. We'll also take a look at where the graphics card fits into all of this and why it's needed. With this 101, you'll look at your games and PC in a new light, and appreciate those graphics with a little more admiration.

Aspects of a frame: pixels and colors

Let's fire up a 3D game, so we have something to start with, and for no reason other than it's probably the most meme-worthy game of all time, we'll use Crytek's 2007 release Crysis. In the image below, we're looking at a camera shot of the monitor displaying the game.

This picture is typically called a frame, but what exactly is it that we're looking at? Well, by using a camera with a macro lens, rather than an in-game screenshot, we can do a spot of CSI: TechSpot and demand someone enhances it!

Unfortunately screen glare and background lighting are getting in the way of the image detail, but if we enhance it just a bit more...

We can see that the frame on the monitor is made up of a grid of individually colored elements and if we look really close, the blocks themselves are built out of 3 smaller bits. Each triplet is called a pixel (short for picture element) and the majority of monitors paint them using three colors: red, green, and blue (aka RGB). For every new frame displayed by the monitor, a list of thousands, if not millions, of RGB values needs to be worked out and stored in a portion of memory that the monitor can access. Such blocks of memory are called buffers, so naturally the monitor is given the contents of something known as a frame buffer.
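To make the idea of a frame buffer a little more concrete, here is a minimal Python sketch. It is only an illustration: the names `make_frame_buffer` and `frame_buffer` are made up for this example, and a real frame buffer lives in graphics memory in a packed binary format, not in a Python list.

```python
# A toy frame buffer: a grid of (red, green, blue) values, one per pixel.
WIDTH, HEIGHT = 4, 3  # tiny on purpose; a 4K frame is 3840 x 2160


def make_frame_buffer(width, height, fill=(0, 0, 0)):
    """Return a height x width grid where every pixel starts as `fill`."""
    return [[fill for _ in range(width)] for _ in range(height)]


frame_buffer = make_frame_buffer(WIDTH, HEIGHT)
frame_buffer[1][2] = (255, 0, 0)  # paint the pixel at row 1, column 2 pure red

# How much data is that at 4K, assuming one byte per color channel?
pixels_in_4k = 3840 * 2160
bytes_in_4k = pixels_in_4k * 3
```

Even under that simple one-byte-per-channel assumption, a single 4K frame works out to over 8 million pixels and roughly 25 million bytes, which is why "millions of RGB values" is no exaggeration.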

That's actually the end point that we're starting with, so now we need to head to the beginning and go through the process to get there. The name rendering is often used to describe this but the reality is that it's a long list of linked but separate stages, that are quite different to each other, in terms of what happens. Think of it as being like a chef making a meal worthy of a Michelin star restaurant: the end result is a plate of tasty food, but much needs to be done before you can tuck in. And just like with cooking, rendering needs some basic ingredients.

The building blocks needed: models and textures

The fundamental building blocks to any 3D game are the visual assets that will populate the world to be rendered. Movies, TV shows, theatre productions and the like, all need actors, costumes, props, backdrops, lights - the list is pretty big. 3D games are no different and everything seen in a generated frame will have been designed by artists and modellers. To help visualize this, let's go old-school and take a look at a model from id Software's Quake II:

Launched over 20 years ago, Quake II was a technological tour de force, although it's fair to say that, like any 3D game two decades old, the models look somewhat blocky. But this allows us to more easily see what this asset is made from.

In the first image, we can see that the chunky fella is built out of connected triangles - the corners of each are called vertices, or vertex for one of them. Each vertex acts as a point in space, so will have at least 3 numbers to describe it, namely x,y,z-coordinates. However, a 3D game needs more than this, and every vertex will have some additional values, such as the color of the vertex, the direction it's facing in (yes, points can't actually face anywhere... just roll with it!), how shiny it is, whether it is translucent or not, and so on.

One specific set of values that vertices always have is to do with texture maps. These are a picture of the 'clothes' the model has to wear, but since it is a flat image, the map has to contain a view for every possible direction we may end up looking at the model from. In our Quake II example, we can see that it is just a pretty basic approach: front, back, and sides (of the arms). A modern 3D game will actually have multiple texture maps for the models, each packed full of detail, with no wasted blank space in them; some of the maps won't look like materials or features, but instead provide information about how light will bounce off the surface. Each vertex will have a set of coordinates in the model's associated texture map, so that it can be 'stitched' on the vertex - this means that if the vertex is ever moved, the texture moves with it.
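As a rough sketch of what one vertex might carry, the Python below bundles a position with a few of the extra values mentioned above, plus a texture coordinate. The `Vertex` class, its field names, and the `texel_at` helper are all hypothetical, chosen just for this illustration; real games define their own vertex layouts.

```python
from dataclasses import dataclass


@dataclass
class Vertex:
    position: tuple  # (x, y, z) point in space
    normal: tuple    # the direction the vertex 'faces'
    color: tuple     # (r, g, b)
    uv: tuple        # (u, v) location in the texture map, each in 0..1


def texel_at(texture, uv):
    """Look up the nearest texture element for a (u, v) coordinate."""
    height, width = len(texture), len(texture[0])
    u, v = uv
    col = min(int(u * width), width - 1)   # clamp so u = 1.0 stays in range
    row = min(int(v * height), height - 1)
    return texture[row][col]


# A 2 x 2 'texture' with labels standing in for colors.
texture = [["a", "b"],
           ["c", "d"]]

corner = Vertex(position=(1.0, 0.0, 0.0), normal=(0.0, 1.0, 0.0),
                color=(255, 255, 255), uv=(0.75, 0.25))
stitched = texel_at(texture, corner.uv)
```

Because the `uv` values travel with the vertex, moving `position` leaves the texture lookup untouched, which is the 'stitching' described above.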

So in a 3D rendered world, everything seen will start as a collection of vertices and texture maps. They are collated into memory buffers that link together -- a vertex buffer contains the information about the vertices; an index buffer tells us how the vertices connect to form shapes; a resource buffer contains the textures and portions of memory set aside to be used later in the rendering process; a command buffer holds the list of instructions of what to do with it all.

This all forms the required framework that will be used to create the final grid of colored pixels. For some games, it can be a huge amount of data because it would be very slow to recreate the buffers for every new frame. Games either store all of the information needed, to form the entire world that could potentially be viewed, in the buffers or store enough to cover a wide range of views, and then update it as required. For example, a racing game like F1 2022 will have everything in one large collection of buffers, whereas an open world game, such as Bethesda's Skyrim, will move data in and out of the buffers, as the camera moves across the world.

Setting out the scene: The vertex stage

With all the visual data to hand, a game will then commence the process to get it visually displayed. To begin with, the scene starts in a default position, with models, lights, etc, all positioned in a basic manner. This would be frame 'zero' -- the starting point of the graphics and often isn't displayed, just processed to get things going. To help demonstrate what is going on with the first stage of the rendering process, we'll use an online tool at the Real-Time Rendering website. Let's open with a very basic 'game': one cuboid on the ground.

This particular shape contains 8 vertices, each one described via a list of numbers, and between them they make a model comprising 12 triangles. One triangle or even one whole object is known as a primitive. As these primitives are moved, rotated, and scaled, the numbers are run through a sequence of math operations and update accordingly.
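A cuboid like this can be written down as two small lists, which is essentially what a vertex buffer and an index buffer hold. The sketch below uses a unit cube with made-up coordinates and ignores details a real engine would care about, such as a consistent winding order for the triangles.

```python
# Vertex buffer: the 8 corners of a unit cube, each an (x, y, z) position.
vertex_buffer = [
    (0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0),  # back face  (z = 0)
    (0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1),  # front face (z = 1)
]

# Index buffer: each triple names the three vertices of one triangle.
index_buffer = [
    (0, 1, 2), (0, 2, 3),  # back
    (4, 5, 6), (4, 6, 7),  # front
    (0, 1, 5), (0, 5, 4),  # bottom
    (3, 2, 6), (3, 6, 7),  # top
    (0, 3, 7), (0, 7, 4),  # left
    (1, 2, 6), (1, 6, 5),  # right
]


def triangle_positions(triangle_number):
    """Return the three corner positions of one triangle."""
    return [vertex_buffer[i] for i in index_buffer[triangle_number]]
```

Each of the 6 faces is split into 2 triangles, giving the 12 mentioned above, and sharing vertices through indices means the 8 corner positions only need to be stored once.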

Note that the model's point numbers haven't changed, just the values that indicate where it is in the world. Covering the math involved is beyond the scope of this 101, but the important part of this process is that it's all about moving everything to where it needs to be first. Then, it's time for a spot of coloring.
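Without going into the full math, a very small Python sketch can show the flavor of it. The functions below scale, rotate, and move a single vertex; the names and the order of operations are our own choices for illustration, and real engines typically fold these steps into matrix multiplications.

```python
import math


def scale(v, factor):
    """Make the point's distance from the model origin `factor` times larger."""
    x, y, z = v
    return (x * factor, y * factor, z * factor)


def rotate_y(v, degrees):
    """Rotate a point about the vertical (y) axis."""
    a = math.radians(degrees)
    x, y, z = v
    return (x * math.cos(a) + z * math.sin(a),
            y,
            -x * math.sin(a) + z * math.cos(a))


def translate(v, offset):
    """Shift a point by an (x, y, z) offset."""
    return tuple(c + o for c, o in zip(v, offset))


def to_world(v, factor, degrees, offset):
    """Scale first, then rotate, then move the point into the world."""
    return translate(rotate_y(scale(v, factor), degrees), offset)


model_vertex = (1.0, 0.0, 0.0)  # the value stored with the model
world_vertex = to_world(model_vertex, 2.0, 90.0, (0.0, 0.0, 5.0))
```

The stored `model_vertex` is never altered; only the computed `world_vertex` says where the point ends up, which here is roughly (0, 0, 3).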

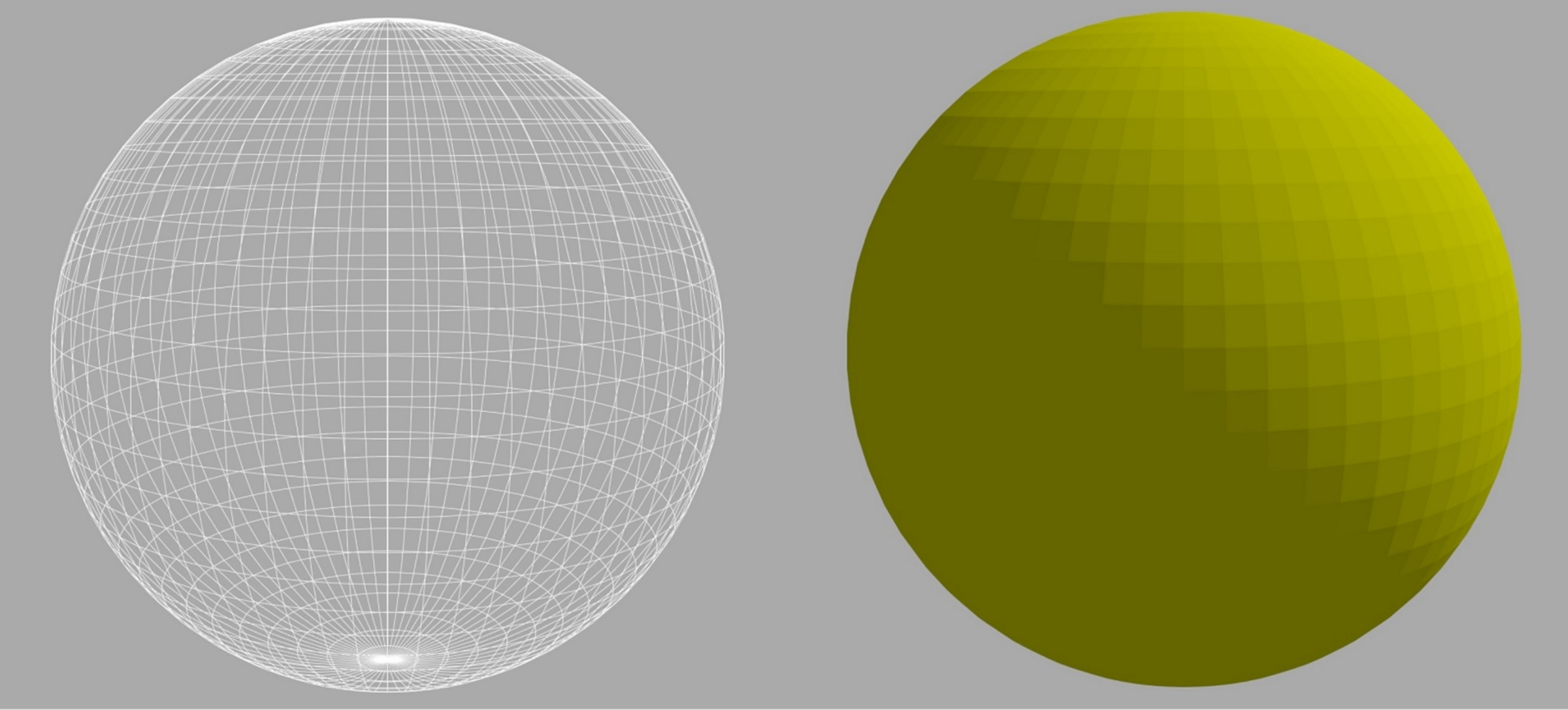

Let's use a different model, with more than 10 times the amount of vertices the previous cuboid had. The most basic type of color processing takes the color of each vertex and then calculates how the color of the surface changes between them; this is known as interpolation.

Having more vertices in a model not only helps to have a more realistic asset, but it also produces better results with the color interpolation.
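One common way to interpolate across a triangle is with barycentric weights, which say how close a point is to each of the three corners. The sketch below works on a flat 2D triangle with made-up coordinates and colors; it is one possible approach, not necessarily what any particular game or tool does.

```python
def barycentric(p, a, b, c):
    """Weights of 2D point p relative to triangle corners a, b and c."""
    (px, py), (ax, ay), (bx, by), (cx, cy) = p, a, b, c
    area = (bx - ax) * (cy - ay) - (cx - ax) * (by - ay)
    wb = ((px - ax) * (cy - ay) - (cx - ax) * (py - ay)) / area
    wc = ((bx - ax) * (py - ay) - (px - ax) * (by - ay)) / area
    return (1.0 - wb - wc, wb, wc)


def interpolate_color(p, corners, colors):
    """Blend the three corner colors according to where p sits."""
    weights = barycentric(p, *corners)
    return tuple(sum(w * color[i] for w, color in zip(weights, colors))
                 for i in range(3))


corners = [(0, 0), (3, 0), (0, 3)]
colors = [(255, 0, 0), (0, 255, 0), (0, 0, 255)]  # red, green, blue corners

at_corner = interpolate_color((0, 0), corners, colors)  # exactly the red corner
at_middle = interpolate_color((1, 1), corners, colors)  # an even mix of all three
```

At a corner, the result is that corner's color; in the middle of the triangle, each corner contributes a third, giving an even grey of about 85 in every channel.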

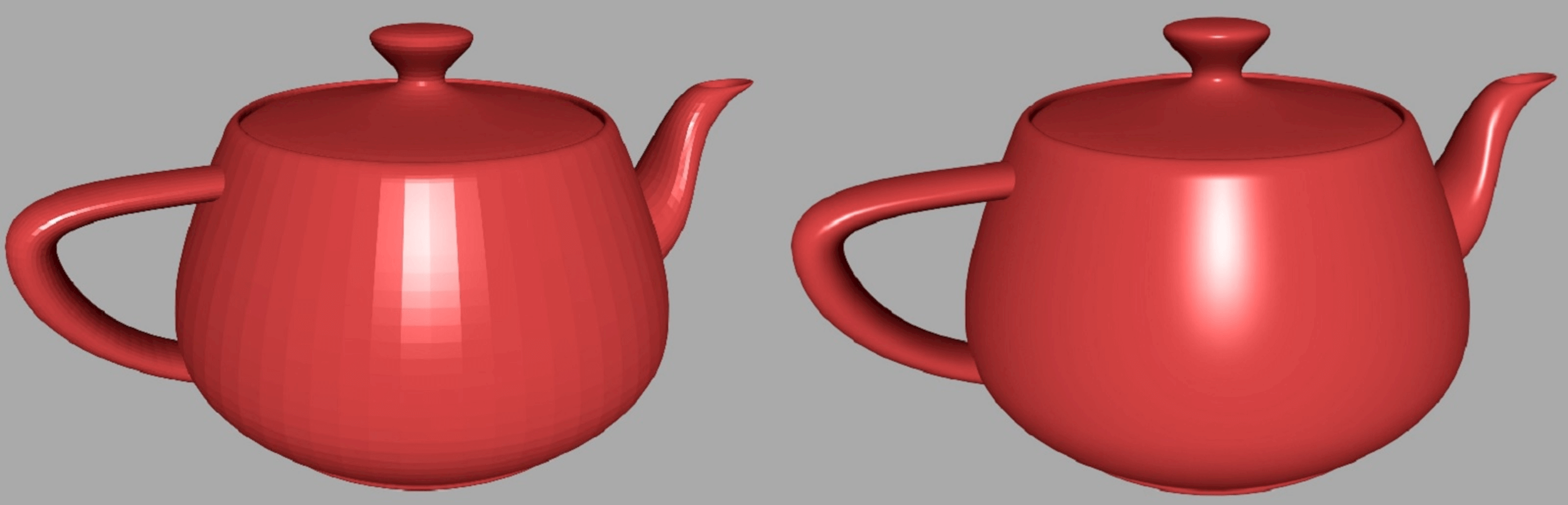

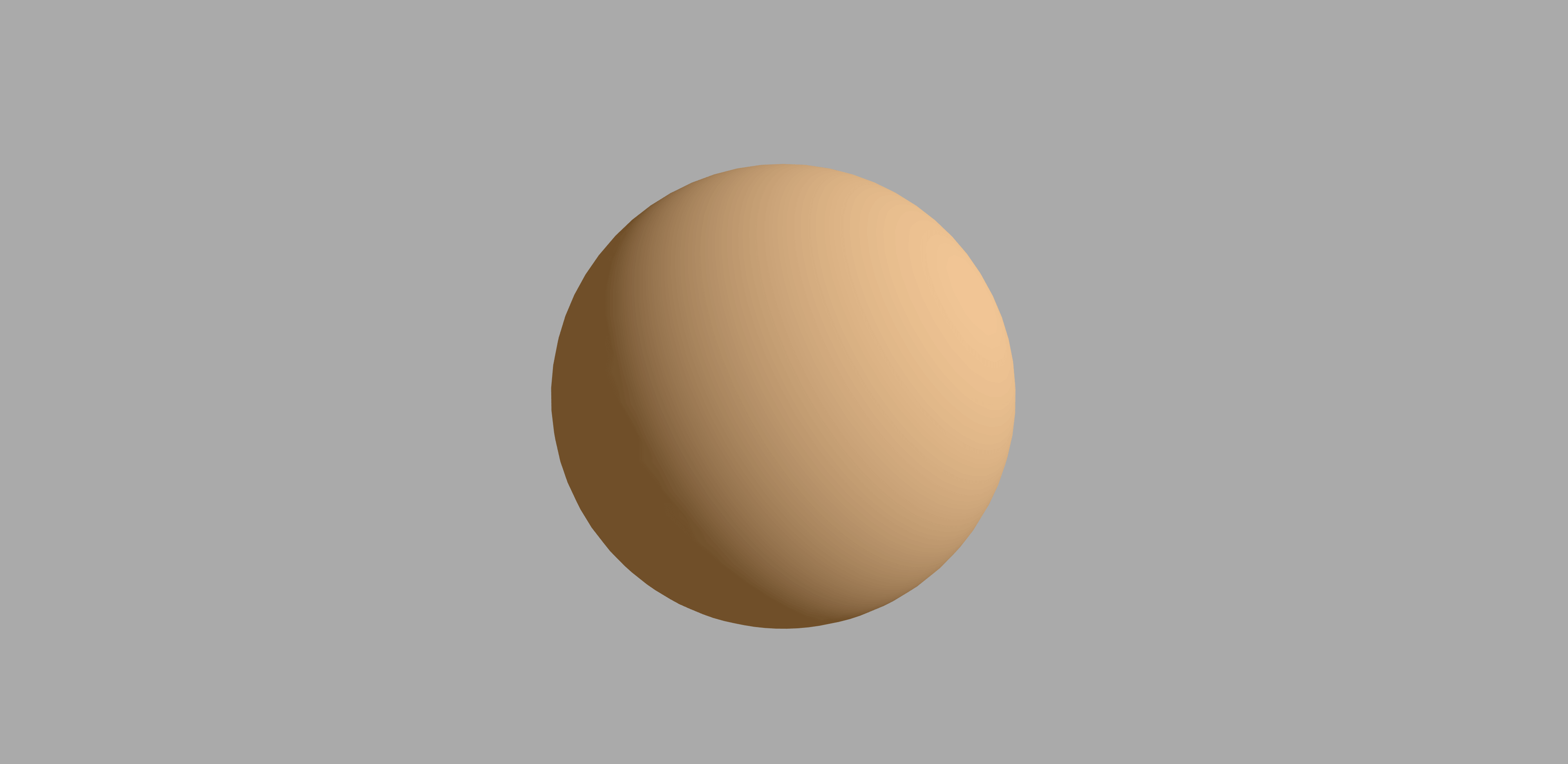

In this stage of the rendering sequence, the effect of lights in the scene can be explored in detail; for example, how the model's materials reflect the light can be introduced. Such calculations need to take into account the position and direction of the camera viewing the world, as well as the position and direction of the lights.

There is a whole array of different math techniques that can be employed here; some simple, some very complicated. In the above image, we can see that the process on the right produces nicer looking and more realistic results but, not surprisingly, it takes longer to work out.

It's worth noting at this point that we're looking at objects with a low number of vertices compared to a cutting-edge 3D game. Go back a bit in this article and look carefully at the image of Crysis: there are over a million triangles in that one scene alone. We can get a visual sense of how many triangles are being pushed around in a modern game by using Unigine's Valley benchmark.

Every object in this image is modelled by vertices connected together, so they make primitives consisting of triangles. The benchmark allows us to run a wireframe mode that makes the program render the edges of each triangle with a bright white line.

The trees, plants, rocks, ground, mountains -- all of them built out of triangles, and every single one of them has been calculated for its position, direction, and color - all taking into account the position of the light source, and the position and direction of the camera. All of the changes done to the vertices have to be fed back to the game, so that it knows where everything is for the next frame to be rendered; this is done by updating the vertex buffer.

Astonishingly though, this isn't the hard part of the rendering process and with the right hardware, it's all finished in just a few thousandths of a second! Onto the next stage.

Losing a dimension: Rasterization

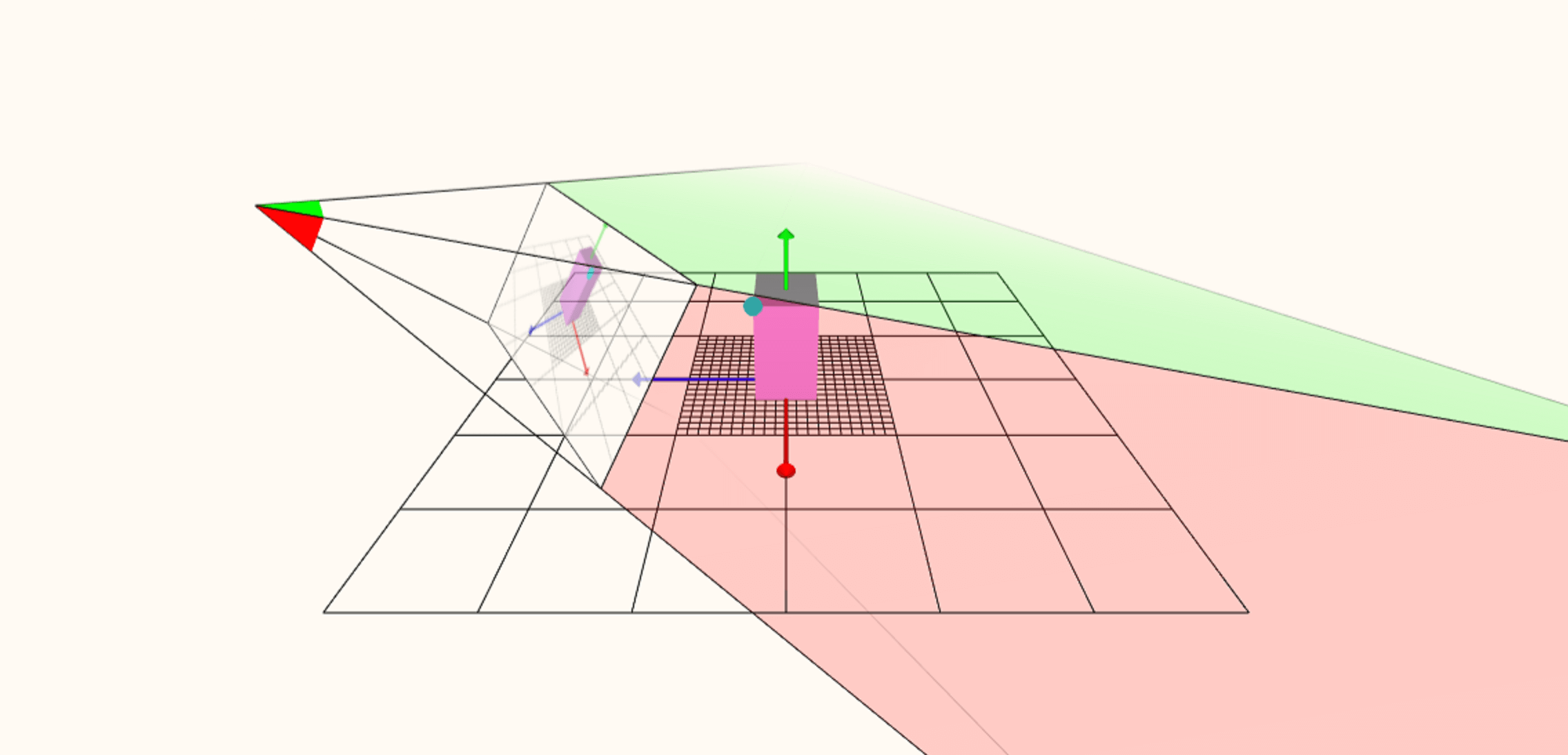

After all the vertices have been worked through and our 3D scene is finalized in terms of where everything is supposed to be, the rendering process moves onto a very significant stage. Up to now, the game has been truly 3 dimensional but the final frame isn't - that means a sequence of changes must take place to convert the viewed world from a 3D space containing thousands of connected points into a 2D canvas of separate colored pixels. For most games, this process involves at least two steps: screen space projection and rasterization.

Using the web rendering tool again, we can force it to show how the world volume is initially turned into a flat image. The position of the camera, viewing the 3D scene, is at the far left; the lines extended from this point create what is called a frustum (kind of like a pyramid on its side) and everything within the frustum could potentially appear in the final frame. A little way into the frustum is the viewport - this is essentially what the monitor will show, and a whole stack of math is used to project everything within the frustum onto the viewport, from the perspective of the camera.
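The heart of that stack of math is a division by distance. As a minimal sketch, assume the camera sits at the origin looking along the positive z-axis, with the viewport a fixed distance in front of it; the function name and that setup are assumptions for this example, and real APIs use 4x4 projection matrices that also handle field of view and depth ranges.

```python
def project(point, viewport_distance=1.0):
    """Project a 3D point onto a viewport in front of the camera."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point is at or behind the camera")
    # Similar triangles: the further away the point, the closer to the center.
    return (viewport_distance * x / z, viewport_distance * y / z)


near = project((2.0, 1.0, 4.0))  # (0.5, 0.25)
far = project((2.0, 1.0, 8.0))   # (0.25, 0.125)
```

The same point moved twice as far from the camera lands half as far from the center of the viewport, which is what gives the flat image its sense of perspective.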

Even though the graphics on the viewport appear 2D, the data within is still actually 3D and this information is then used to work out which primitives will be visible or overlap. This can be surprisingly hard to do because a primitive might cast a shadow in the game that can be seen, even if the primitive can't. The removing of primitives is called culling and can make a significant difference to how quickly the whole frame is rendered. Once this has all been done - sorting the visible and non-visible primitives, binning triangles that lie outside of the frustum, and so on -- the last stage of 3D is closed down and the frame becomes fully 2D through rasterization.
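To give a feel for the simplest kind of culling, the sketch below throws away a projected triangle when all three of its corners sit beyond the same edge of the viewport. This is a deliberately crude test with made-up names and a viewport assumed to span -1 to 1 in both directions; real culling also deals with depth, back-facing triangles, and the shadow problem mentioned above.

```python
def outside_viewport(triangle, half_width=1.0, half_height=1.0):
    """True if a projected 2D triangle lies wholly beyond one viewport edge."""
    xs = [x for x, _ in triangle]
    ys = [y for _, y in triangle]
    return (max(xs) < -half_width or min(xs) > half_width or
            max(ys) < -half_height or min(ys) > half_height)


triangles = [
    [(-0.5, -0.5), (0.5, -0.5), (0.0, 0.5)],  # fully on screen
    [(2.0, 0.0), (3.0, 0.0), (2.5, 1.0)],     # entirely off to the right
    [(0.5, 0.0), (1.5, 0.0), (1.0, 0.5)],     # straddles the right edge
]

kept = [t for t in triangles if not outside_viewport(t)]
```

Only the second triangle is dropped; the one straddling the edge has to be kept, because part of it can still be seen.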

The above image shows a very simple example of a frame containing one primitive. The grid that the frame's pixels make is compared to the edges of the shape underneath, and where they overlap, a pixel is marked for processing. Granted the end result in the example shown doesn't look much like the original triangle but that's because we're not using enough pixels. This has resulted in a problem called aliasing, although there are plenty of ways of dealing with this. This is why changing the resolution (the total number of pixels used in the frame) of a game has such a big impact on how it looks: not only do the pixels better represent the shape of the primitives but it reduces the impact of the unwanted aliasing.
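A small Python sketch can mimic this comparison. It tests the center of every pixel against the three edges of a triangle, which is one common way to decide coverage; the function names are our own, the triangle is given directly in pixel units, and pixels whose center lands exactly on an edge are counted as covered, a simplification real hardware replaces with stricter tie-breaking rules.

```python
def edge(a, b, p):
    """Signed area test: which side of the line a->b does point p fall on?"""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize(triangle, width, height):
    """Return the (column, row) of every pixel whose center is in the triangle."""
    a, b, c = triangle
    covered = []
    for row in range(height):
        for col in range(width):
            p = (col + 0.5, row + 0.5)  # sample at the pixel center
            w0, w1, w2 = edge(a, b, p), edge(b, c, p), edge(c, a, p)
            # Inside if all three tests agree in sign (either winding order).
            if (w0 >= 0 and w1 >= 0 and w2 >= 0) or \
               (w0 <= 0 and w1 <= 0 and w2 <= 0):
                covered.append((col, row))
    return covered


# The same shape (half of the frame) at two resolutions.
low = rasterize([(0, 0), (4, 0), (0, 4)], 4, 4)
high = rasterize([(0, 0), (8, 0), (0, 8)], 8, 8)

low_fraction = len(low) / (4 * 4)    # 10 of 16 pixels
high_fraction = len(high) / (8 * 8)  # 36 of 64 pixels
```

The triangle really covers half of the frame, but the 4 x 4 grid marks 62.5% of its pixels while the 8 x 8 grid marks about 56%: more pixels means the staircase edge hugs the true shape more closely.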

Once this part of the rendering sequence is done, it's onto the big one: the final coloring of all the pixels in the frame.

Bring in the lights: The pixel stage

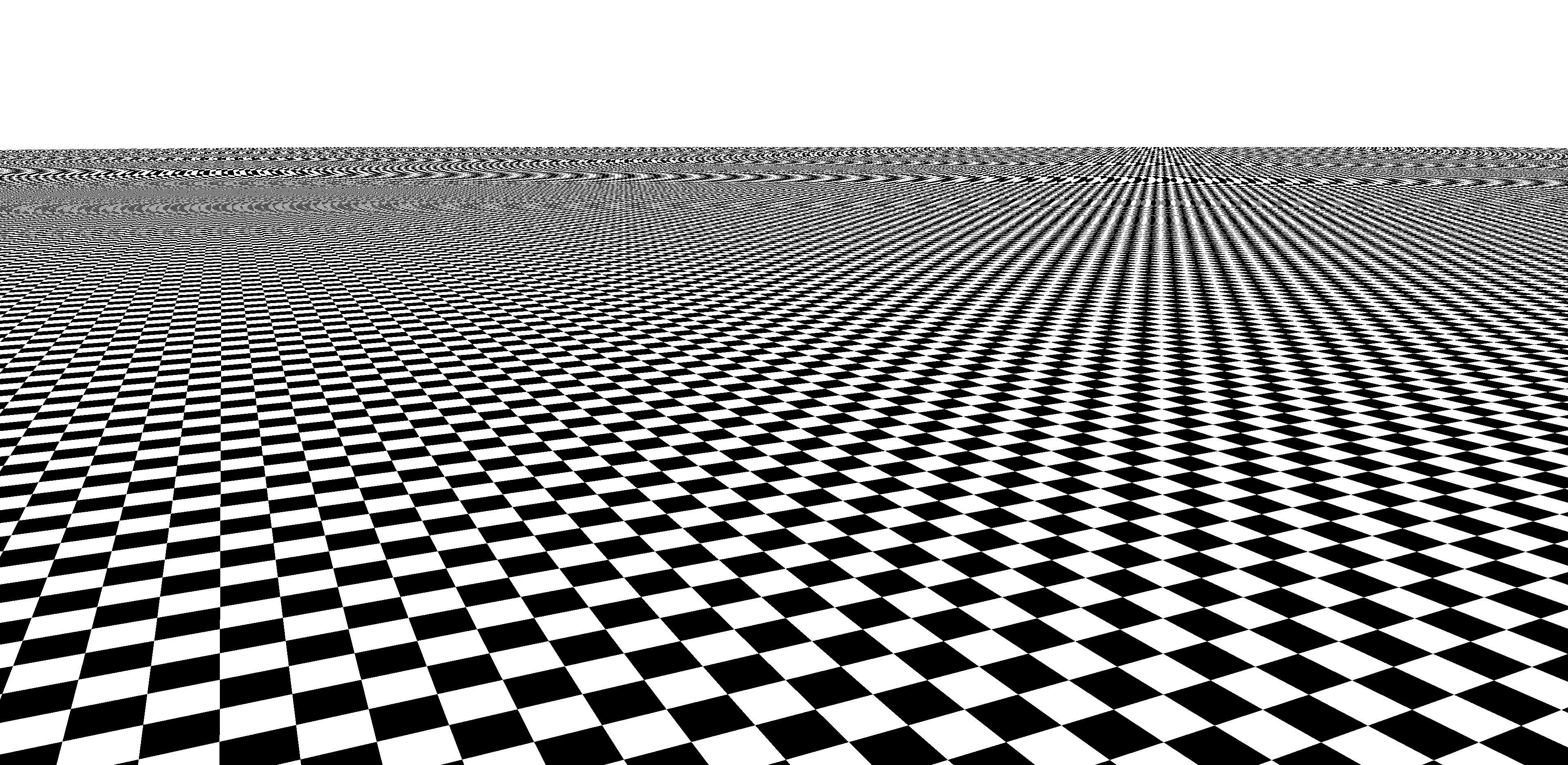

So now we come to the most challenging of all the steps in the rendering chain. Years ago, this was nothing more than the wrapping of the model's clothes (aka the textures) onto the objects in the world, using the information in the pixels (originally from the vertices). The problem here is that while the textures and the frame are all 2D, the world to which they were attached has been twisted, moved, and reshaped in the vertex stage. Yet more math is employed to account for this, but the results can generate some weird problems.

In this image, a simple checker board texture map is being applied to a flat surface that stretches off into the distance. The result is a jarring mess, with aliasing rearing its ugly head again. The solution involves smaller versions of the texture maps (known as mipmaps), the repeated use of data taken from these textures (called filtering), and even more math, to bring it all together. The effect of this is quite pronounced:

This used to be really hard work for any game to do but that's no longer the case, because the liberal use of other visual effects, such as reflections and shadows, means that the processing of the textures just becomes a relatively small part of the pixel processing stage. Playing games at higher resolutions also generates a higher workload in the rasterization and pixel stages of the rendering process, but has relatively little impact in the vertex stage. Although the initial coloring due to lights is done in the vertex stage, fancier lighting effects can also be employed here.
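Going back to mipmaps for a moment, the idea can be sketched in a few lines of Python. Here each smaller level is made by averaging 2 x 2 blocks of the level above, using a single brightness value per texture element; that box-filter approach and the function names are assumptions for this example, and it only works for square textures whose size is a power of two.

```python
def checkerboard(size):
    """A size x size texture of alternating white (255) and black (0)."""
    return [[255 if (r + c) % 2 == 0 else 0 for c in range(size)]
            for r in range(size)]


def downsample(texture):
    """Halve the texture by averaging each 2 x 2 block into one value."""
    half = len(texture) // 2
    return [[(texture[2 * r][2 * c] + texture[2 * r][2 * c + 1] +
              texture[2 * r + 1][2 * c] + texture[2 * r + 1][2 * c + 1]) / 4
             for c in range(half)]
            for r in range(half)]


def build_mipmaps(texture):
    """Return the full chain, from the original down to a 1 x 1 texture."""
    levels = [texture]
    while len(levels[-1]) > 1:
        levels.append(downsample(levels[-1]))
    return levels


levels = build_mipmaps(checkerboard(4))  # sizes 4, 2 and 1
```

The smaller levels of this checker board come out as a flat mid-grey (127.5), so a distant surface sampled from them looks evenly grey instead of flickering between black and white.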

In the above image, we can no longer easily see the color changes between the triangles, giving us the impression that this is a smooth, seamless object. In this particular example, the sphere is actually made up from the same number of triangles that we saw in the green sphere earlier in this article, but the pixel coloring routine gives the impression that it has considerably more triangles.

In lots of games, the pixel stage needs to be run a few times. For example, a mirror or lake surface reflecting the world, as it looks from the camera, needs to have the world rendered to begin with. Each run through is called a pass and one frame can easily involve 4 or more passes to produce the final image.

Sometimes the vertex stage needs to be done again, too, to redraw the world from a different perspective and use that view as part of the scene viewed by the game player. This requires the use of render targets -- buffers that act as the final store for the frame but can be used as textures in another pass.

To get a deeper understanding of the potential complexity of the pixel stage, read Adrian Courrèges' frame analysis of Doom 2016 and marvel at the incredible amount of steps required to make a single frame in that game.

All of this work on the frame needs to be saved to a buffer, whether as a finished result or a temporary store, and in general, a game will have at least two buffers on the go for the final view: one will be "work in progress" and the other is either waiting for the monitor to access it or is in the process of being displayed. There always needs to be a frame buffer available to render into, so once they're all full, an action needs to take place to move things along and start a fresh buffer. The last part in signing off a frame is a simple command (e.g. present) and with this, the final frame buffers are swapped about, the monitor gets the last frame rendered and the next one can be started.
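The two-buffer arrangement can be sketched like so. The `SwapChain` class here is a toy written for this article's purposes, with `present` named after the command mentioned above; it is not the interface of Direct3D, OpenGL, or Vulkan, which manage this with far more machinery.

```python
class SwapChain:
    """Two frame buffers: one being displayed, one being drawn into."""

    def __init__(self, width, height):
        def blank():
            return [[(0, 0, 0)] * width for _ in range(height)]

        self.front = blank()  # what the monitor is currently shown
        self.back = blank()   # the "work in progress" frame

    def draw_pixel(self, col, row, color):
        """Rendering only ever touches the back buffer."""
        self.back[row][col] = color

    def present(self):
        """Sign off the frame: swap the two buffers over."""
        self.front, self.back = self.back, self.front


chain = SwapChain(4, 3)
chain.draw_pixel(2, 1, (0, 255, 0))
shown_before = chain.front[1][2]  # still black: the frame isn't finished
chain.present()
shown_after = chain.front[1][2]   # now green: the new frame is on display
```

Nothing is copied when a frame is presented; the two buffers simply trade roles, so the old front buffer becomes the canvas for the next frame.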

In this image, from Ubisoft's Assassin's Creed Odyssey, we are looking at the contents of a finished frame buffer. Think of it being like a spreadsheet, with rows and columns of cells, containing nothing more than a number. These values are sent to the monitor or TV in the form of an electric signal, and the color of the screen's pixels is altered to the required values. Because we can't do CSI: TechSpot with our eyes, we see a flat, continuous picture but our brains interpret it as having depth - i.e. 3D. One frame of gaming goodness, but with so much going on behind the scenes (pardon the pun), it's worth having a look at how programmers handle it all.

Managing the process: APIs and instructions

Figuring out how to make a game perform and manage all of this work (the math, vertices, textures, lights, buffers, you name it…) is a mammoth task. Fortunately, there is help in the form of what is called an application programming interface or API for short.

APIs for rendering reduce the overall complexity by offering structures, rules, and libraries of code, that allow programmers to use simplified instructions that are independent of any hardware involved. Pick any 3D game, released in the past 3 years for the PC, and it will have been created using one of three famous APIs: Direct3D, OpenGL, or Vulkan. There are others, especially in the mobile scene, but we'll stick with these ones for this article.

While there are differences in terms of the wording of instructions and operations (e.g. a block of code to process pixels in DirectX is called a pixel shader; in Vulkan, it's called a fragment shader), the end result of the rendered frame isn't, or more rather, shouldn't be different.

Where there will be a difference comes down to what hardware is used to do all the rendering. This is because the instructions issued using the API need to be translated for the hardware to perform -- this is handled by the device's drivers and hardware manufacturers have to dedicate lots of resources and time to ensuring the drivers do the conversion as quickly and correctly as possible.

Let's use an earlier beta version of Croteam's 2014 game The Talos Principle to demonstrate this, as it supports the 3 APIs we've mentioned. To amplify the differences that the combination of drivers and interfaces can sometimes produce, we ran the standard built-in benchmark on maximum visual settings at a resolution of 1080p. The PC used ran at default clocks and sported an Intel Core i7-9700K, Nvidia Titan X (Pascal) and 32 GB of DDR4 RAM.

- DirectX 9 = 188.4 fps average

- DirectX 11 = 202.3 fps average

- OpenGL = 87.9 fps average

- Vulkan = 189.4 fps average

A full analysis of the implications behind these figures isn't within the aim of this article, and they certainly do not mean that one API is 'better' than another (this was a beta version, don't forget), so we'll leave matters with the remark that programming for different APIs presents various challenges and, for the moment, there will always be some variation in performance. Generally speaking, game developers will choose the API they are most experienced in working with and optimize their code on that basis. Sometimes the word engine is used to describe the rendering code, but technically an engine is the full package that handles all of the aspects in a game, not just its graphics.

Creating a complete program, from scratch, to render a 3D game is no simple thing, which is why so many games today licence full systems from other developers (e.g. Unreal Engine); you can get a sense of the scale by viewing the open source engine for id Software's Quake and browsing through the gl_draw.c file - this single item contains the instructions for various rendering operations performed in the game, and represents just a small part of the whole engine. Quake is over 20 years old, and the entire game (including all of the assets, sounds, music, etc) is 55 MB in size; for contrast, Ubisoft's Far Cry 5 keeps just the shaders used by the game in a file that's 62 MB in size.

Time is everything: Using the correct hardware

Everything that we have described so far can be calculated and processed by the CPU of any computer system; modern x86-64 processors easily support all of the math required and have dedicated parts in them for such things. However, doing this work to render one frame involves a lot of repetitive calculations and demands a significant amount of parallel processing. CPUs aren't ultimately designed for this, as they're far too general by required design. Specialized chips for this kind of work are, of course, called GPUs (graphics processing units), and they are built to do the math needed by the likes of DirectX, OpenGL, and Vulkan very quickly and hugely in parallel.

One way of demonstrating this is by using a benchmark that allows us to render a frame using a CPU and then using specialized hardware. We'll use V-ray Next by Chaos Group; this tool actually does ray-tracing rather than the rendering we've been looking at in this article, but much of the number crunching requires similar hardware aspects.

To gain a sense of the difference between what a CPU can do and what the right, custom-designed hardware can achieve, we ran the V-ray GPU benchmark in 3 modes: CPU only, GPU only, and then CPU+GPU together. The results are markedly different:

- CPU only test = 53 mpaths

- GPU only test = 251 mpaths

- CPU+GPU test = 299 mpaths

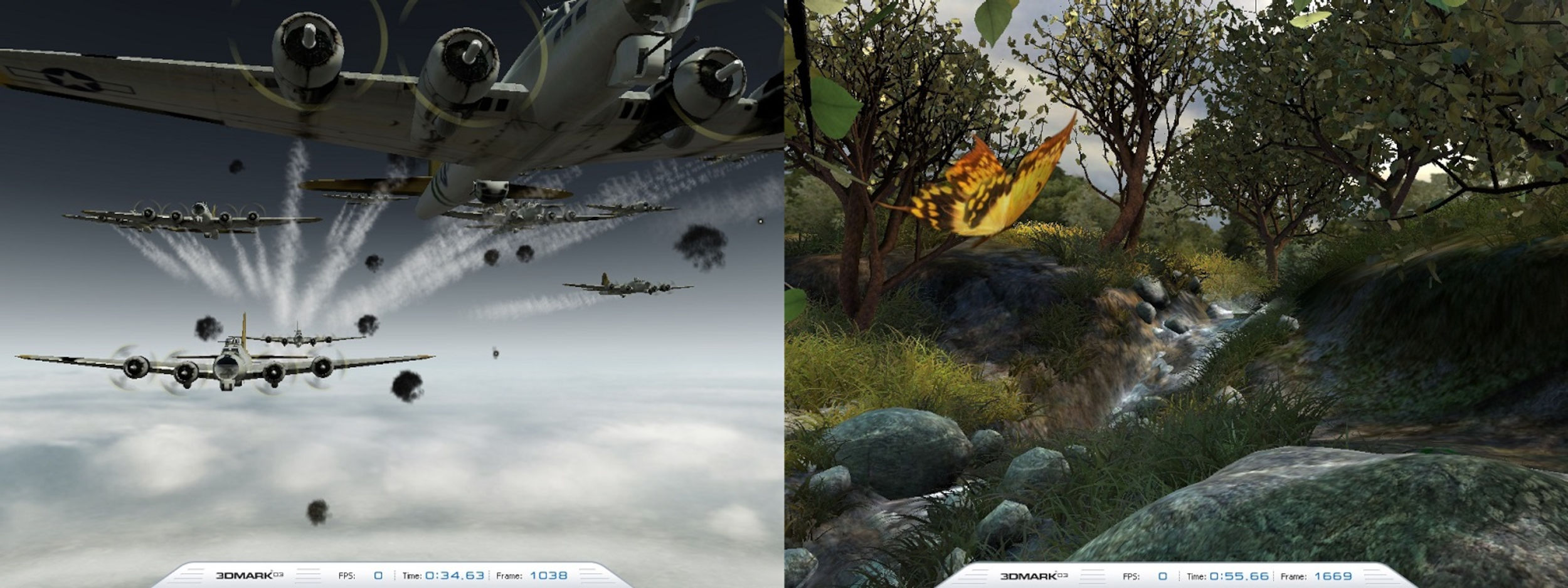

We can ignore the units of measurement in this benchmark, as a 5x difference in output is no trivial matter. But this isn't a very game-like test, so let's try something else and go a bit old-school with Futuremark's 3DMark03. Running the simple Wings of Fury test, we can force it to do all of the vertex shaders (i.e. all of the routines done to move and color triangles) using the CPU.

The outcome shouldn't really come as a surprise but nevertheless, it's far more pronounced than we saw in the V-ray test:

- CPU vertex shaders = 77 fps on average

- GPU vertex shaders = 1580 fps on average

With the CPU handling all of the vertex calculations, each frame was taking 13 milliseconds on average to be rendered and displayed; pushing that math onto the GPU drops this time right down to 0.6 milliseconds. In other words, it was more than 20 times faster.
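Those frame times come straight from the frame rates: one second is 1000 milliseconds, so the time per frame is 1000 divided by the fps figure. A quick Python check, using only the two Wings of Fury results quoted above:

```python
def frame_time_ms(fps):
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps


cpu_ms = frame_time_ms(77)    # just under 13 ms per frame
gpu_ms = frame_time_ms(1580)  # a little over 0.6 ms per frame
speedup = 1580 / 77           # how many times faster the GPU run was
```

The ratio of the two frame rates comes out at roughly 20.5, which is where "more than 20 times faster" comes from.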

The difference is even more remarkable if we try the most complex test, Mother Nature, in the benchmark. With CPU processed vertex shaders, the average result was a paltry 3.1 fps! Bring in the GPU and the average frame rate rises to 1388 fps: nearly 450 times quicker. Now don't forget that 3DMark03 is 16 years old, and the test only processed the vertices on the CPU -- rasterization and the pixel stage were still done via the GPU. What would it be like if it was modern and the whole lot was done in software?

Let's try Unigine's Valley benchmark tool again -- it's relatively new, the graphics it processes are very much like those seen in games such as Ubisoft's Far Cry 5; it also provides a full software-based renderer, in addition to the standard DirectX 11 GPU route. The results don't need much of an analysis but running the lowest quality version of the DirectX 11 test on the GPU gave an average result of 196 frames per second. The software version? A couple of crashes aside, the mighty test PC ground out an average of 0.1 frames per second - almost two thousand times slower.

The reason for such a difference lies in the math and data format that 3D rendering uses. In a CPU, it is the floating point units (FPUs) within each core that perform the calculations; the test PC's i7-9700K has 8 cores, each with two FPUs. While the units in the Titan X are different in design, they can both do the same fundamental math, on the same data format. This particular GPU has over 3500 units to do a comparable calculation and even though they're not clocked anywhere near the same as the CPU (1.5 GHz vs 4.7 GHz), the GPU outperforms the central processor through sheer unit count.
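A back-of-the-envelope comparison shows how unit count can outweigh clock speed. The sketch below multiplies the number of units by the clock speed for each chip, using only the figures quoted above (and 3500 as a lower bound for the GPU). It assumes, very crudely, that every unit does the same amount of work per clock cycle; that ignores vector widths, memory speed, and much else, so treat the result as a rough indication and nothing more.

```python
# CPU: 8 cores, each with 2 floating point units, at 4.7 GHz.
cpu_units = 8 * 2
cpu_clock_ghz = 4.7

# GPU: over 3500 units (3500 used as a lower bound) at 1.5 GHz.
gpu_units = 3500
gpu_clock_ghz = 1.5

# 'Unit-cycles' per nanosecond: a crude stand-in for raw math throughput.
cpu_throughput = cpu_units * cpu_clock_ghz
gpu_throughput = gpu_units * gpu_clock_ghz

ratio = gpu_throughput / cpu_throughput
```

Even with the GPU's clock at less than a third of the CPU's, this rough tally puts it around 70 times ahead, purely because there are so many more units working at once.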

While a Titan X isn't a mainstream graphics card, even a budget model would outperform any CPU, which is why all 3D games and APIs are designed for dedicated, specialized hardware. Feel free to download V-ray, 3DMark, or any Unigine benchmark, and test your own system -- post the results in the forum, so we can see just how well designed GPUs are for rendering graphics in games.

Some final words on our 101

This was a short run through of how one frame in a 3D game is created, from dots in space to colored pixels in a monitor.

At its most fundamental level, the whole process is nothing more than working with numbers, because that's all computers do anyway. However, a great deal has been left out in this article, to keep it focused on the basics (we'll likely follow up later with deeper dives into how computer graphics are made). We didn't include any of the actual math used, such as the Euclidean linear algebra, trigonometry, and differential calculus performed by vertex and pixel shaders; we glossed over how textures are processed through statistical sampling, and left aside cool visual effects like screen space ambient occlusion, ray trace de-noising, high dynamic range imaging, or temporal anti-aliasing.

But when you next fire up a round of Call of Mario: Deathduty Battleyard, we hope that not only will you see the graphics with a new sense of wonder, but you'll be itching to find out more.

Keep reading the full 3D Game Rendering series

Part 0: 3D Game Rendering 101

The Making of Graphics Explained

Part 1: 3D Game Rendering: Vertex Processing

A Deeper Dive Into the World of 3D Graphics

Part 2: 3D Game Rendering: Rasterization and Ray Tracing

From 3D to Flat 2D, POV and Lighting

Part 3: 3D Game Rendering: Texturing

Bilinear, Trilinear, Anisotropic Filtering, Bump Mapping, More

Part 4: 3D Game Rendering: Lighting and Shadows

The Math of Lighting, SSR, Ambient Occlusion, Shadow Mapping

Part 5: 3D Game Rendering: Anti-Aliasing

SSAA, MSAA, FXAA, TAA, and Others

Source: https://www.techspot.com/article/1851-3d-game-rendering-explained/

Posted by: barajasyoub1995.blogspot.com
